MediaWiki is the wiki software used by all Wikimedia Foundation projects and by many other wikis. It was originally written to support the development of the Wikipedia encyclopedia, but it is now also used commercially for knowledge management and as a content management system. This article introduces MediaWiki and explains why you might need it. If you need a cheap VPS server, check out the packages offered on the Eldernode website.

Everything About MediaWiki

What is MediaWiki?

MediaWiki is an open-source and free wiki software and collaboration platform that allows you to create personal and customized wikis. This wiki software is licensed under the GPL and can be considered a type of content management system (CMS) that is written in PHP and usually uses a MySQL database. It has been localized in over 350 languages, and its reliability and robust feature set have led to a large and vibrant community of users and third-party developers.

MediaWiki Features

Here are some key features of MediaWiki:

– Powerful, Multilingual, Extensible, Customizable, and Reliable

– Easy installation

– Works on most hardware/software combinations

– Scalable and suitable for both small and large sites

– Available in over 350 languages

Code Base and Practices of MediaWiki

The rest of this section explains MediaWiki’s code base and development practices.

Security

Because MediaWiki is the platform for high-profile sites like Wikipedia, the core developers and code reviewers enforce strict security rules. To help developers write secure code, MediaWiki provides wrappers around HTML output and database queries that handle escaping. To sanitize user input, a developer uses the WebRequest class, which analyzes data passed in a URL or via a POSTed form. It removes “magic quotes” and slashes, strips illegal input characters, and normalizes Unicode sequences. Cross-site request forgery (CSRF) is avoided by using tokens, and cross-site scripting (XSS) by validating inputs and escaping outputs, usually with PHP’s htmlspecialchars() function. MediaWiki also provides and uses an XHTML sanitizer, the Sanitizer class, as well as database functions that prevent SQL injection.
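
The two defences described above, tokens against CSRF and output escaping against XSS, can be sketched in a few lines. The following is a minimal illustration in Python, not MediaWiki’s PHP implementation; the function names and the session secret are hypothetical and exist only for this example.

```python
import hmac
import html
import secrets

# Hypothetical per-session secret (an assumption for this sketch); a real
# application would keep it in its server-side session store.
SESSION_SECRET = secrets.token_bytes(32)


def make_edit_token(session_secret: bytes, action: str) -> str:
    """Derive a token bound to one session secret and one action."""
    return hmac.new(session_secret, action.encode("utf-8"), "sha256").hexdigest()


def check_edit_token(session_secret: bytes, action: str, submitted: str) -> bool:
    """Return True only if the submitted token matches (CSRF defence)."""
    expected = make_edit_token(session_secret, action)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, submitted)


def render_comment(user_text: str) -> str:
    """Escape user input on output so markup is shown, not executed (XSS defence)."""
    return "<p>" + html.escape(user_text, quote=True) + "</p>"
```

A form handler would call check_edit_token() before applying any change and would pass every piece of user-supplied text through an escaping function such as render_comment() before sending it to the browser.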

Configuration

MediaWiki provides many configuration settings, which are stored in PHP global variables. Their default values are set in DefaultSettings.php, and the system administrator can override them by editing LocalSettings.php. MediaWiki has historically been overly dependent on global variables, both for configuration and for context processing. Globals have serious security implications in combination with PHP’s register_globals setting, which MediaWiki has not needed since version 1.2. They also limit the potential abstractions for configuration and make it more difficult to optimize the setup process. In addition, the configuration namespace is shared with variables used for registration and object context, which can lead to conflicts. Global configuration variables also make MediaWiki harder to configure and maintain.
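
The layering described above, defaults first and site overrides second, can be illustrated with an explicit configuration object instead of globals. This is a hedged Python sketch, not MediaWiki code: the setting names mirror MediaWiki’s naming style, but the values and the load_settings() helper are assumptions made for the example.

```python
from types import MappingProxyType

# Illustrative defaults, standing in for what DefaultSettings.php provides.
DEFAULT_SETTINGS = {
    "wgSitename": "MediaWiki",
    "wgLanguageCode": "en",
    "wgEnableUploads": False,
}


def load_settings(defaults: dict, local_overrides: dict) -> MappingProxyType:
    """Apply site overrides on top of the defaults and return a read-only view.

    Returning one immutable object, rather than writing globals, keeps
    configuration separate from unrelated variables.
    """
    merged = dict(defaults)
    merged.update(local_overrides)
    return MappingProxyType(merged)


# Stands in for the administrator's edits in LocalSettings.php.
settings = load_settings(DEFAULT_SETTINGS, {"wgSitename": "Example Wiki"})
```

Code that needs a setting reads it from the settings object, so an override cannot collide with a variable that happens to share its name.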

Database and Text Storage of MediaWiki

MediaWiki uses a relational database backend. MySQL is the default database management system (DBMS) and is the one used on all Wikimedia sites, but community-supported implementations exist for other DBMSs such as PostgreSQL, Oracle, and SQLite. You choose the DBMS when installing MediaWiki. MediaWiki also provides a database abstraction layer and a query abstraction layer that simplify database access for developers.

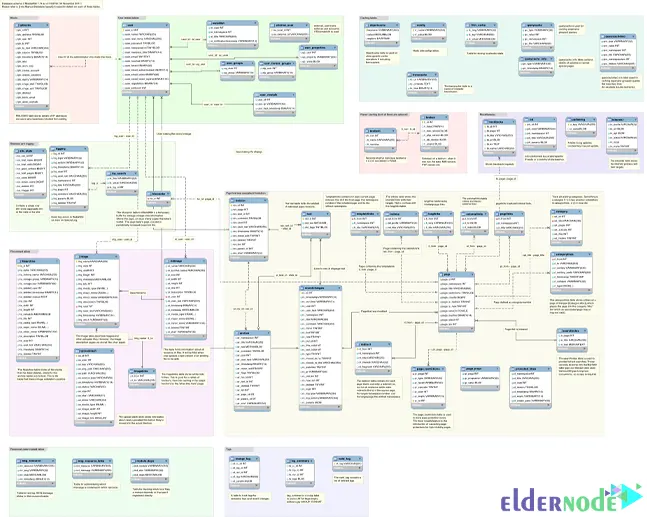

The layout above contains dozens of tables. Many of them hold the wiki’s content, such as page, revision, category, and recentchanges. Other tables hold data about users (user, user_groups), media files (image, filearchive), caching (objectcache, l10n_cache, querycache), and internal tools (job for the job queue), among others. Complete documentation of the database layout is available on the MediaWiki website. MediaWiki uses indexes and summary tables extensively because SQL queries that scan large numbers of rows can be very expensive, especially on Wikimedia sites. For the same reason, unindexed queries are usually discouraged.
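
The cost difference between a full scan and an indexed lookup is easy to observe. The sketch below uses Python’s built-in sqlite3 module with a deliberately simplified page table (an assumption; MediaWiki’s real table has more columns) and asks SQLite for its query plan before and after an index is created.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> str:
    """Return SQLite's plan for a query as one human-readable string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # The fourth column of each row holds the textual description of the step.
    return " | ".join(row[3] for row in rows)


conn = sqlite3.connect(":memory:")
# Simplified stand-in for the page table, for illustration only.
conn.execute(
    "CREATE TABLE page (page_id INTEGER PRIMARY KEY, page_title TEXT NOT NULL)"
)
conn.executemany(
    "INSERT INTO page (page_title) VALUES (?)",
    [(f"Page_{i}",) for i in range(1000)],
)

lookup = "SELECT page_id FROM page WHERE page_title = ?"

# Without an index, the database has to scan every row of the table.
plan_without_index = query_plan(conn, lookup, ("Page_42",))

conn.execute("CREATE INDEX page_title_idx ON page (page_title)")

# With the index, the same query becomes a direct search.
plan_with_index = query_plan(conn, lookup, ("Page_42",))
```

Printing the two plans shows a table scan in the first case and a search that uses page_title_idx in the second. The query is also parameterized with a ? placeholder, which is the usual way to keep user input out of the SQL text.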

Requests, Caching and Delivery of MediaWiki

The following sections explain how MediaWiki handles requests, caching, and delivery.

Workflow of Executing a Web Request

index.php is MediaWiki’s main entry point and handles most of the requests processed by the application servers. The code executed from index.php performs security checks, loads the default configuration settings from includes/DefaultSettings.php, guesses the configuration with includes/Setup.php, and then applies the site settings contained in LocalSettings.php. Next, it instantiates a MediaWiki object ($mediawiki) and creates a Title object ($wgTitle) based on the title and action parameters of the request.

Next, MediaWiki::performRequest() is called to handle most URL requests. It checks for bad titles, read restrictions, local interwiki redirects, and redirect loops, and determines whether the request is for a normal page or a special page.

Normal page requests are handed to MediaWiki::initializeArticle(), which creates an Article object for the page ($wgArticle), and then to MediaWiki::performAction(), which handles the “standard” actions. Once the action has completed, MediaWiki::finalCleanup() finalizes the request by committing database transactions, outputting the HTML, and triggering pending updates through the job queue. MediaWiki::restInPeace() then commits the deferred updates and closes the task.

If the requested page is a special, software-generated page such as Statistics, SpecialPageFactory::executePath is called instead of initializeArticle(), and the corresponding PHP script is then run. Special pages include a variety of reports (recent changes, logs, uncategorized pages) and wiki administration tools (user blocks, user rights changes). Their execution workflow depends on their function.
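
The branching described above (reject bad titles, then route to either a special page or a normal page action) can be sketched as a small dispatcher. This is a simplified Python illustration, not MediaWiki’s code: the Request class, the set of illegal characters, and the handler tables are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict

SPECIAL_PREFIX = "Special:"


@dataclass
class Request:
    title: str
    action: str = "view"


def is_bad_title(title: str) -> bool:
    """Simplified title check; the real rules are more detailed."""
    return not title.strip() or any(ch in title for ch in "<>[]{}|")


def perform_request(
    request: Request,
    special_pages: Dict[str, Callable[[], str]],
    actions: Dict[str, Callable[[str], str]],
) -> str:
    """Route a request to a special page handler or a normal page action."""
    if is_bad_title(request.title):
        return "error: bad title"

    if request.title.startswith(SPECIAL_PREFIX):
        # Special pages are looked up by name and run their own code.
        handler = special_pages.get(request.title[len(SPECIAL_PREFIX):])
        if handler is None:
            return "error: no such special page"
        return handler()

    # Normal pages go through one of the standard actions (view, edit, ...).
    action = actions.get(request.action)
    if action is None:
        return "error: unknown action"
    return action(request.title)
```

For example, with special_pages = {"Statistics": ...} and actions = {"view": ...}, a request for Special:Statistics reaches the first table and a request for Main_Page reaches the second.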

Many functions contain profiling code, which makes it possible to follow the execution workflow for debugging when profiling is enabled. Profiling is done by calling the wfProfileIn and wfProfileOut functions to start and stop profiling a function, respectively; both take the function name as a parameter. MediaWiki sends UDP packets to a central server that collects them and produces profiling data.
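
The paired start/stop pattern described above can be illustrated with a small collector. The following Python sketch is an analogue written for this article, not the wfProfileIn/wfProfileOut implementation; it keeps timings in memory instead of sending them over UDP, and the class and method names are hypothetical.

```python
import time
from collections import defaultdict


class Profiler:
    """Collects call counts and elapsed time per named section."""

    def __init__(self) -> None:
        self._open = []  # stack of (name, start time) for sections in progress
        self.totals = defaultdict(float)
        self.calls = defaultdict(int)

    def profile_in(self, name: str) -> None:
        """Mark the start of a section."""
        self._open.append((name, time.perf_counter()))

    def profile_out(self, name: str) -> None:
        """Mark the end of a section; it must match the most recent start."""
        if not self._open or self._open[-1][0] != name:
            raise RuntimeError(f"profile_out({name!r}) without matching profile_in")
        _, started = self._open.pop()
        self.totals[name] += time.perf_counter() - started
        self.calls[name] += 1
```

A function would call profile_in() with its own name on entry and profile_out() with the same name on exit; nested sections work because the open sections form a stack.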

Caching

The first level of caching consists of reverse caching proxies (Squids) that intercept and serve most requests before they reach the MediaWiki application servers. Squids hold static versions of entire rendered pages, which are served for simple reads to users who are not logged in. MediaWiki natively supports Squid and Varnish and integrates with this caching layer. Requests from logged-in users, and other requests that the Squids cannot serve, are forwarded to the web server (Apache).

The second level of caching comes into play when MediaWiki renders and assembles a page from multiple objects, many of which can be cached to minimize future calls. These objects include the page’s interface (sidebar, menus, user interface text) and the content proper, parsed from wikitext. An in-memory object cache has been available in MediaWiki since the early 1.1 version (2003), and it is particularly important for avoiding re-parsing of long and complex pages.
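
The benefit of caching parsed content can be shown with a toy example. In this Python sketch the cache key includes the revision ID, so a new revision is parsed once and then served from memory; the class, the key layout, and the one-rule renderer are assumptions for illustration and do not reflect MediaWiki’s actual parser cache.

```python
import re
from typing import Callable, Dict, Tuple


def render_wikitext(wikitext: str) -> str:
    """Toy renderer that only handles '''bold''' markup."""
    return re.sub(r"'''(.+?)'''", r"<b>\1</b>", wikitext)


class ParsedPageCache:
    """In-memory cache of rendered HTML, keyed by (page ID, revision ID)."""

    def __init__(self, render: Callable[[str], str]) -> None:
        self._render = render
        self._store: Dict[Tuple[int, int], str] = {}
        self.misses = 0

    def get_html(self, page_id: int, revision_id: int, wikitext: str) -> str:
        key = (page_id, revision_id)
        if key not in self._store:
            # Cache miss: parse once and remember the result.
            self.misses += 1
            self._store[key] = self._render(wikitext)
        return self._store[key]
```

Repeated views of the same revision hit the cache, while an edit produces a new revision ID and therefore a fresh parse.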

Login session data can also be stored in memcached, which lets sessions work transparently across multiple front-end web servers in a load-balanced setup.

The last caching layer is the PHP opcode cache, which is commonly enabled to speed up PHP applications. Compilation can be a lengthy process; a PHP accelerator avoids compiling PHP scripts every time they are invoked by storing the compiled opcode and executing it directly. MediaWiki will “just work” with many accelerators such as APC, PHP accelerator, and eAccelerator.

MediaWiki is optimized for this complete, layered, distributed caching infrastructure due to its Wikimedia bias. However, it natively supports alternative setups for smaller sites as well.

ResourceLoader

MediaWiki’s interface uses JavaScript to make it more interactive and responsive. ResourceLoader loads JS and CSS assets on demand, which saves loading and parsing time for features that are not being used. It also minifies code, groups resources to save requests, and can embed images as data URIs.
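
Three of the techniques mentioned above, grouping resources, minifying them, and embedding images as data URIs, can be sketched briefly. This Python example is an illustration only and does not reproduce ResourceLoader’s implementation; the function names are hypothetical and the minifier is deliberately naive.

```python
import base64
import re
from typing import Dict, Iterable


def minify_css(css: str) -> str:
    """Naive minifier: drop comments and collapse runs of whitespace."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.S)
    return re.sub(r"\s+", " ", css).strip()


def bundle(modules: Dict[str, str], requested: Iterable[str]) -> str:
    """Group only the requested modules into one response body."""
    return "\n".join(minify_css(modules[name]) for name in requested)


def to_data_uri(data: bytes, mime_type: str) -> str:
    """Embed binary data (such as a small image) directly in a URI."""
    encoded = base64.b64encode(data).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"
```

Serving one bundle instead of several files saves round trips, and a data URI removes the separate request that a small image would otherwise need.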

Conclusion

In this article, we introduced MediaWiki, its main features, and how it handles security, configuration, storage, requests, and caching. We hope it helps you decide whether MediaWiki fits your needs. If you have any questions or suggestions, you can contact us in the Comments section.